Apache Hadoop YARN is a cluster resource manager that allocates resources, schedules jobs, and monitors them. Amazon EMR uses Apache Hadoop YARN by default. Configuring YARN's CapacityScheduler on AWS EMR on Amazon EC2 can increase cluster efficiency and address the challenges of resource allocation, resource contention, and job scheduling in a multi-tenant cluster. In this post, we will go through Hadoop YARN, CapacityScheduler, and AWS EMR on Amazon EC2.

Overview of AWS EMR on EC2

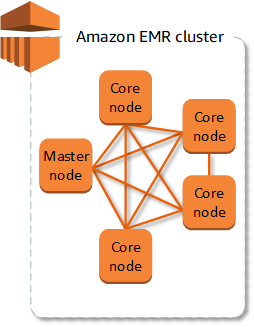

The cluster is the main component of AWS EMR. A cluster is a collection of AWS EC2 instances, also known as nodes. An EMR cluster has three types of nodes. AWS EMR installs different software components on each type, and those components determine the role the node plays.

- Master node: This node runs the software components that coordinate the cluster. It distributes data and tasks to the other nodes and monitors them. A master node on its own can act as a single-node cluster.

- Core node: A node whose software components run tasks and store data in the Hadoop Distributed File System (HDFS).

- Task node: A node whose software components run tasks but do not store data in HDFS.

Amazon EMR offers scalability, flexibility, security, cost savings, management interfaces, and more. AWS EMR is good at creating and managing clusters of AWS EC2 instances running Hadoop. Hadoop Yet Another Resource Negotiator (YARN) is the resource manager that keeps track of the cluster's resources and dynamically allocates them to tasks. Let’s dive into Hadoop YARN and its CapacityScheduler.

Apache Hadoop YARN

Hadoop YARN is a cluster resource manager that splits the functionalities of resource management and job scheduling and monitoring into separate components. It has three main components:

- ResourceManager: The ResourceManager is the ultimate authority that arbitrates and allocates resources for jobs submitted to Hadoop YARN. It consists of two parts:

  - ApplicationsManager: It is responsible for accepting job submissions, negotiating the first container for running the ApplicationMaster, and restarting the ApplicationMaster container when a failure happens.

  - Scheduler: It allocates cluster resources to submitted jobs. It is a pure scheduler: it does not monitor or track application status, and it does not restart failed applications. Hadoop YARN provides CapacityScheduler, FairScheduler, and FifoScheduler.

- ApplicationMaster: The ApplicationsManager of the ResourceManager negotiates the first container, in which the ApplicationMaster runs. Once started, the ApplicationMaster registers itself with the ResourceManager, then negotiates further containers from the Scheduler and monitors and tracks their status.

- NodeManager: The NodeManager launches and manages the containers on its node, and those containers do the work specified by the ApplicationMaster. The NodeManager also runs a service that checks the health of the node on which it is executing. If a failure occurs, the NodeManager reports it to the ResourceManager, which stops assigning containers to that node.

Hadoop YARN on AWS EMR

Amazon EMR on AWS EC2 uses Hadoop YARN as the default resource manager for the distributed data processing frameworks that support it. Data platforms differ from each other and have different needs. With Amazon EMR, you can customize settings at cluster creation using configuration classifications, reconfigure applications on a running cluster, and supply additional configuration classifications for each instance group in a running cluster, using the AWS Command Line Interface (AWS CLI) or the AWS SDK.

CapacityScheduler

CapacityScheduler is designed to run Hadoop applications, such as MapReduce jobs, in a shared, multi-tenant cluster while maximizing throughput and cluster utilization. Traditionally, each organization has its own compute resources with enough capacity to meet its SLAs, but this tends to lead to poor average utilization and to the overhead of managing multiple independent clusters, one per organization. Sharing one large Hadoop installation between organizations is a cost-effective alternative to creating private clusters.

CapacityScheduler lets organizations share a large cluster while giving each of them a minimum capacity guarantee, which requires strong support for multi-tenancy. Other features of CapacityScheduler are:

- Security: Each queue has strict ACLs that control which users can submit applications to it, and there are safeguards to ensure that users cannot view or modify the jobs of other users.

- Operability

- Elasticity: Free resources can be allocated to a queue beyond its capacity. If a queue running below its capacity later needs those resources, they are assigned to it as the tasks already running on them complete. This keeps resources available to the queues entitled to them without leaving capacity idle.

- Multi-tenancy

- Resource-based scheduling
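The capacity guarantee and the elasticity feature above can be illustrated with a toy model. This is only a sketch under simplifying assumptions: the `Queue` class, the `allocate` function, the queue names, and the percentages are all hypothetical, and the real CapacityScheduler works on individual containers and waits for running tasks to finish (or preempts them) rather than recomputing shares in one step.

```python
from dataclasses import dataclass


@dataclass
class Queue:
    name: str
    guaranteed: int  # guaranteed capacity, in percent of the cluster
    maximum: int     # elastic ceiling, in percent of the cluster
    demand: int      # what the queue's pending jobs ask for, in percent


def allocate(queues, cluster=100):
    """Two-pass toy allocation: guarantees first, then elastic borrowing."""
    # Pass 1: every queue gets its demand, capped at its guarantee.
    grants = {q.name: min(q.demand, q.guaranteed) for q in queues}
    spare = cluster - sum(grants.values())
    # Pass 2: hand idle capacity to queues that still want more,
    # never exceeding their configured maximum.
    for q in queues:
        extra = min(q.demand, q.maximum) - grants[q.name]
        extra = max(0, min(extra, spare))
        grants[q.name] += extra
        spare -= extra
    return grants


# "adhoc" is mostly idle, so "etl" borrows beyond its 60% guarantee.
print(allocate([Queue("etl", 60, 100, 90), Queue("adhoc", 40, 60, 10)]))
# {'etl': 90, 'adhoc': 10}

# Once "adhoc" needs its guarantee back, "etl" falls back to 60%.
print(allocate([Queue("etl", 60, 100, 90), Queue("adhoc", 40, 60, 40)]))
# {'etl': 60, 'adhoc': 40}
```

The sketch assumes the guarantees add up to at most 100 percent, which is what makes the first pass always satisfiable.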

CapacityScheduler depends on a ResourceCalculator to calculate the available resources and their allocation to ApplicationMasters. ResourceCalculator is an abstract Java class, and Hadoop YARN provides two implementations of it:

- DefaultResourceCalculator – This implementation calculates resources based on memory only.

- DominantResourceCalculator – This implementation is based on the Dominant Resource Fairness (DRF) model of resource allocation. DRF computes each user's share of every resource. It relies on two notions: the user’s dominant share, which is the maximum among all of the user's shares, and the dominant resource, which is the resource corresponding to that dominant share.
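The two DRF notions can be made concrete with a few lines of Python. This is a minimal sketch, not YARN's implementation: the function names, the resource keys (`vcores`, `memory_gb`), and the cluster and user figures are illustrative assumptions.

```python
def dominant_share(usage, capacity):
    """Return (dominant_resource, dominant_share) for one user.

    usage and capacity map a resource name to an amount.
    """
    # Share of each resource = what the user holds / what the cluster has.
    shares = {res: usage.get(res, 0) / capacity[res] for res in capacity}
    # The dominant resource is the one with the largest share.
    resource = max(shares, key=shares.get)
    return resource, shares[resource]


def next_user(usages, capacity):
    """DRF offers the next allocation to the user with the smallest dominant share."""
    return min(usages, key=lambda user: dominant_share(usages[user], capacity)[1])


cluster = {"vcores": 9, "memory_gb": 18}
usages = {
    "A": {"vcores": 1, "memory_gb": 4},  # memory-heavy: 1/9 vcores, 4/18 memory
    "B": {"vcores": 3, "memory_gb": 1},  # CPU-heavy: 3/9 vcores, 1/18 memory
}

for user, usage in usages.items():
    print(user, dominant_share(usage, cluster))
print("next allocation goes to:", next_user(usages, cluster))
```

Here user A's dominant resource is memory (share 4/18) and user B's is vcores (share 3/9), so A, holding the smaller dominant share, would be served next. A memory-only calculator would see only the `memory_gb` column and miss that B is consuming a third of the cluster's CPU.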

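Because it accounts for more than one resource, DominantResourceCalculator is a better ResourceCalculator for data processing environments running heterogeneous workloads. By default, Amazon EMR uses DefaultResourceCalculator for CapacityScheduler, as set by the `yarn.scheduler.capacity.resource-calculator` property.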
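The resource calculator and the queue capacities are ordinary CapacityScheduler properties, so on EMR they can be supplied through the `capacity-scheduler` configuration classification. The sketch below builds such a configuration in Python and prints it as JSON. The helper name `capacity_scheduler_config`, the queue names `default` and `etl`, and the percentages are assumptions chosen for illustration; the property keys are the standard CapacityScheduler ones.

```python
import json

DRF_CALCULATOR = "org.apache.hadoop.yarn.util.resource.DominantResourceCalculator"


def capacity_scheduler_config(queues, calculator=DRF_CALCULATOR):
    """Build an EMR configuration list for the capacity-scheduler classification.

    queues maps a queue name to (capacity, maximum_capacity), both in percent.
    """
    # CapacityScheduler expects sibling queue capacities to total 100 percent.
    if sum(capacity for capacity, _ in queues.values()) != 100:
        raise ValueError("queue capacities must add up to 100")

    props = {
        "yarn.scheduler.capacity.resource-calculator": calculator,
        "yarn.scheduler.capacity.root.queues": ",".join(queues),
    }
    for name, (capacity, maximum) in queues.items():
        prefix = f"yarn.scheduler.capacity.root.{name}"
        props[f"{prefix}.capacity"] = str(capacity)
        props[f"{prefix}.maximum-capacity"] = str(maximum)
    return [{"Classification": "capacity-scheduler", "Properties": props}]


# Hypothetical two-queue layout: 40% guaranteed for "default", 60% for "etl".
config = capacity_scheduler_config({"default": (40, 60), "etl": (60, 100)})
print(json.dumps(config, indent=2))
```

The printed JSON could be saved to a file and passed at cluster creation, for example with the `--configurations` option of `aws emr create-cluster`. Treat the values as a starting point to adapt, not as recommended settings.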

Conclusion

Configuring Hadoop YARN CapacityScheduler on AWS EMR on Amazon EC2 is a good fit for multi-tenant heterogeneous workloads. In this post, we covered the components involved, starting from the basics. Because AWS EMR uses Hadoop YARN by default, CapacityScheduler is straightforward to configure there.