In today’s world of IoT devices and distributed applications that generate huge amounts of data, that data must be analyzed before it becomes valuable. Azure Stream Analytics No-Code Editor makes this analytics work easier. Its drag-and-drop interface lets you create a Stream Analytics job without writing a single line of code, and it simplifies data ingestion, transformation, and loading tasks. With the No-Code Editor, you can develop and run a job that does the following:

- Filter and ingest data to Azure Synapse SQL

- Capture streaming data from Event Hubs to an Azure Data Lake Storage Gen2 account in Parquet format

- Materialize data in Azure Cosmos DB

- Filter and ingest data to Azure Data Lake Storage Gen2

- Enrich data and ingest it to an event hub

- Transform and store data in Azure SQL Database

- Filter and ingest data to Azure Data Explorer

You can also transform data before writing it to the destination. For example, you can:

- Modify input schemas.

- Perform data preparation operations such as joins and filters.

- Handle advanced scenarios such as time-window aggregations (tumbling, hopping, and session windows) for group-by operations.
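
To make the three window types concrete, here is a minimal Python sketch that groups hypothetical event timestamps (in seconds) the way each window type does. It is not Stream Analytics code: the function names are made up for illustration, windows are treated as half-open `[start, start + size)` intervals that begin at time 0, and Stream Analytics' own boundary rules may differ.

```python
from collections import defaultdict


def tumbling_counts(timestamps, size):
    """Count events per fixed, non-overlapping window [start, start + size)."""
    counts = defaultdict(int)
    for t in timestamps:
        counts[(t // size) * size] += 1
    return dict(sorted(counts.items()))


def hopping_counts(timestamps, size, hop):
    """Count events per overlapping window [start, start + size); a new window starts every `hop` units."""
    counts = defaultdict(int)
    for t in timestamps:
        start = (t // hop) * hop  # latest window start at or before t
        # Walk back through earlier window starts while their window still covers t.
        # Assumption: windows begin at time 0, so negative starts are skipped.
        while start >= 0 and start > t - size:
            counts[start] += 1
            start -= hop
    return dict(sorted(counts.items()))


def session_windows(timestamps, timeout):
    """Group events into sessions; a gap of at least `timeout` starts a new session.

    Returns (first_event, last_event, event_count) per session.
    Stream Analytics session windows also take a maximum duration; that limit is omitted here.
    """
    sessions = []
    for t in sorted(timestamps):
        if sessions and t - sessions[-1][-1] < timeout:
            sessions[-1].append(t)
        else:
            sessions.append([t])
    return [(s[0], s[-1], len(s)) for s in sessions]


events = [1, 3, 7, 12, 13, 30]  # hypothetical event times in seconds
print(tumbling_counts(events, 10))    # → {0: 3, 10: 2, 30: 1}
print(hopping_counts(events, 10, 5))  # → {0: 3, 5: 3, 10: 2, 25: 1, 30: 1}
print(session_windows(events, 5))     # → [(1, 7, 3), (12, 13, 2), (30, 30, 1)]
```

Note how a hopping window whose hop is smaller than its size counts some events more than once (the event at 7 falls into the windows starting at 0 and 5), while tumbling windows count each event exactly once.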

Prerequisites

Before you develop Stream Analytics jobs with the No-Code Editor, make sure you meet these requirements:

- The Azure Event Hubs namespace and any target destination resource that you write to must be publicly accessible and can’t be in an Azure virtual network.

- You must have the required permissions to access the streaming input and output resources.

- You must have permissions to create and modify Azure Stream Analytics resources.

The following steps walk through using the Azure Stream Analytics No-Code Editor.

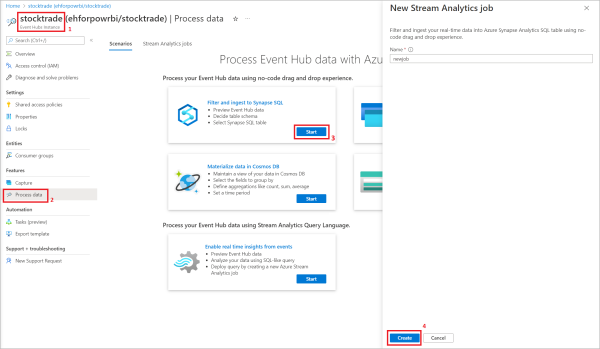

A. First, create a Stream Analytics job. To do so, open an Event Hubs instance, select Process Data, and then select a template.

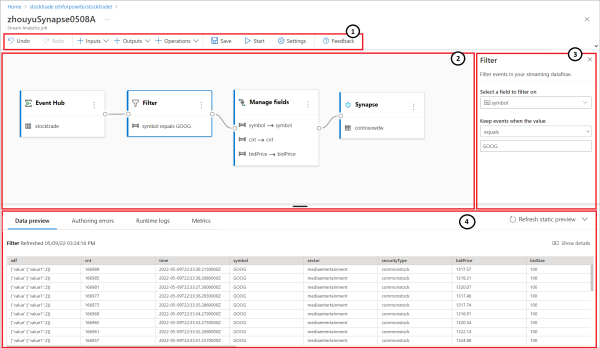

The screenshot below shows the newly created Stream Analytics job.

- Here you can view the inputs, outputs, and operations, along with buttons to save your progress and start the job, the settings, and feedback.

- The area marked 2 in red shows a graphical representation of the Stream Analytics job, from inputs through operations to outputs.

- The right-side pane shows settings for modifying the input, transformation, or output.

- This area contains the tabs for data preview, authoring errors, runtime logs, and metrics. Data preview is especially useful: it lets you see the data at every stage as you apply transformations and operations.

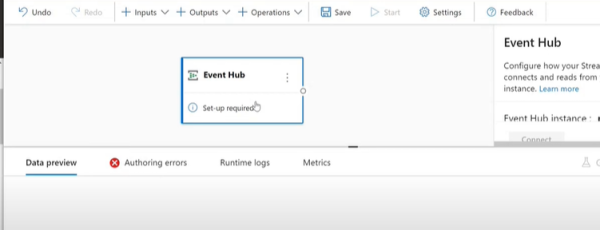

B. Configure an event hub as the input for your job. To do so, select the Event Hub icon.

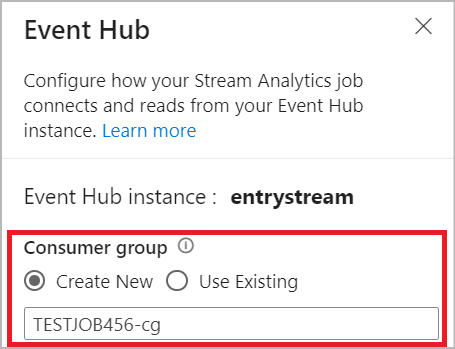

To connect to the event hub in the No-Code Editor, create a consumer group.

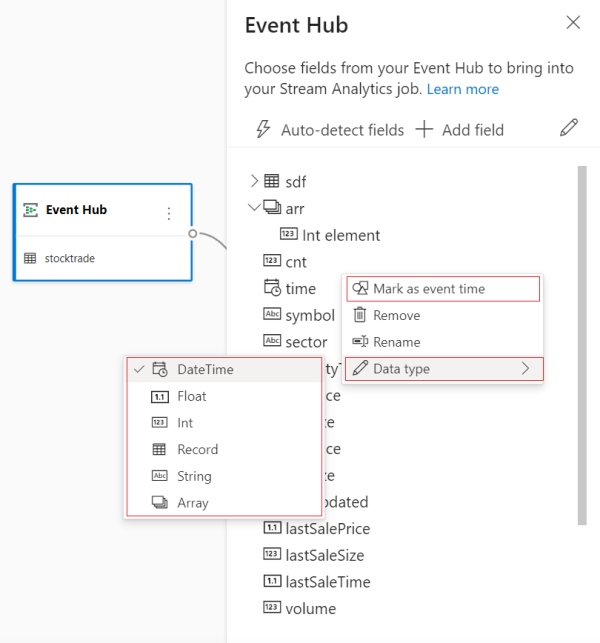

After creating the consumer group, select Use existing and choose Managed Identity as the authentication mode. The Azure Event Hubs Data Owner role is then granted to the managed identity of the Stream Analytics job. Next, select Connect. If you know the field names, select + Add field and add the fields; otherwise, select Autodetect fields to detect fields and data types automatically from the incoming messages. You can now preview the incoming messages in the Data Preview table. Select the three dots next to each field to expand, select, and edit any nested fields in the incoming messages.
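
Autodetect fields infers field names and types from sample messages. The editor's actual detection logic isn't described here, so the following is only a hypothetical Python sketch of the idea: it parses sample JSON messages, flattens nested objects into dotted field names (similar to expanding nested fields in the Data Preview), and records the Python type seen for each field. The message contents and the `infer_schema` function are illustrative assumptions, and the type names are Python's, not Stream Analytics data types.

```python
import json


def infer_schema(messages):
    """Infer a flat {field_name: type_name} mapping from a list of JSON strings.

    Nested objects are flattened into dotted names (for example "location.lat").
    If a field shows different types across messages, the types are joined with "|".
    """
    schema = {}

    def visit(obj, prefix=""):
        for key, value in obj.items():
            name = f"{prefix}{key}"
            if isinstance(value, dict):
                visit(value, name + ".")  # descend into nested records
            else:
                seen = schema.setdefault(name, [])
                type_name = type(value).__name__
                if type_name not in seen:
                    seen.append(type_name)

    for raw in messages:
        visit(json.loads(raw))
    return {name: "|".join(types) for name, types in schema.items()}


# Hypothetical telemetry messages as they might arrive from an event hub.
samples = [
    '{"deviceId": "sensor-1", "temperature": 21.5, "location": {"lat": 47.6, "lon": -122.3}}',
    '{"deviceId": "sensor-2", "temperature": 22, "location": {"lat": 40.7, "lon": -74.0}}',
]
print(infer_schema(samples))
# → {'deviceId': 'str', 'temperature': 'float|int', 'location.lat': 'float', 'location.lon': 'float'}
```

The mixed `float|int` result for `temperature` shows why it is worth reviewing detected types: a value such as `22` parses as an integer even when the field is really a decimal measurement.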

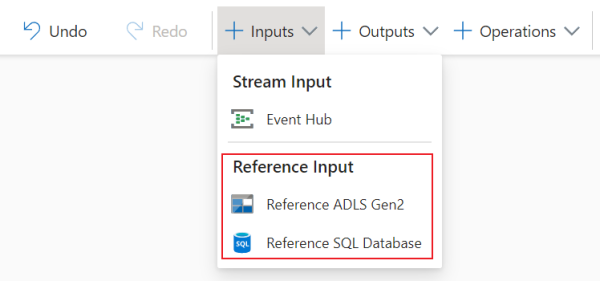

C. Select Inputs to join the streaming input with reference data. The available reference data sources are:

- Azure Data Lake Storage Gen2

- Azure SQL Database

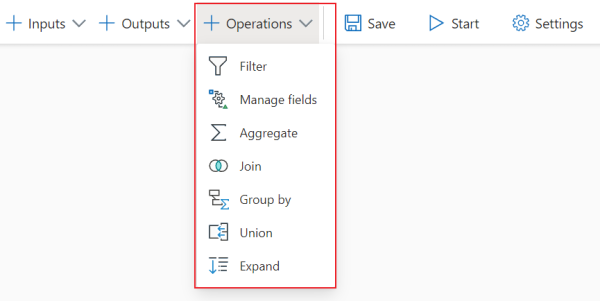
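
Joining a stream with reference data works like a lookup: each incoming event is matched against a mostly static table on a key field. The sketch below is a hypothetical Python illustration of that idea, not the Stream Analytics implementation; the `deviceId` key, the device metadata, and the `join_reference` function are all assumptions made for the example.

```python
def join_reference(events, reference_rows, key, keep_unmatched=False):
    """Enrich each streaming event with the reference row that has the same `key` value.

    By default this behaves like an inner join: events with no matching reference
    row are dropped. Set keep_unmatched=True for left-outer-join behaviour.
    """
    lookup = {row[key]: row for row in reference_rows}  # build the lookup table once
    enriched = []
    for event in events:
        ref = lookup.get(event.get(key))
        if ref is None:
            if keep_unmatched:
                enriched.append(dict(event))
            continue
        merged = dict(event)
        for field, value in ref.items():
            merged.setdefault(field, value)  # event fields win on name clashes
        enriched.append(merged)
    return enriched


# Hypothetical device metadata, as it might be loaded from a reference table or file.
reference = [
    {"deviceId": "sensor-1", "building": "B12", "floor": 3},
    {"deviceId": "sensor-2", "building": "B07", "floor": 1},
]
stream = [
    {"deviceId": "sensor-1", "temperature": 21.5},
    {"deviceId": "sensor-9", "temperature": 30.0},  # no matching reference row
]
print(join_reference(stream, reference, "deviceId"))
# → [{'deviceId': 'sensor-1', 'temperature': 21.5, 'building': 'B12', 'floor': 3}]
print(join_reference(stream, reference, "deviceId", keep_unmatched=True))
```

Building the dictionary once keeps each per-event lookup cheap, which reflects why reference data is usually a small, slowly changing dataset rather than a second high-volume stream.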

D. To add a streaming data transformation to your job, select it from the Operations drop-down list.

- Filter transformation filters events based on the value of a field in the input.

- Manage field transformation helps add, remove, or rename fields coming in from an input or another transformation.

- Aggregate transformation calculates the sum, minimum, maximum, or average every time a new event occurs over a period of time.

- Join transformation combines events from two inputs based on the field pairs that you select.

- Group by transformation calculates aggregations across all events within a certain time window.

- Union transformation connects two or more inputs to combine events that have shared fields into one table.

- Expand array transformation creates a new row for each value within an array.
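
The following Python sketch illustrates what four of these operations (Filter, Manage fields, Expand array, and Union) do to a list of events. The function names and sample data are hypothetical; in particular, the Union sketch assumes that fields not present in every event are dropped, and it does not compare data types.

```python
def filter_events(events, predicate):
    """Filter: keep only events for which predicate(event) is True."""
    return [e for e in events if predicate(e)]


def manage_fields(events, rename=None, remove=()):
    """Manage fields: drop the fields in `remove` and rename fields per the `rename` mapping."""
    rename = rename or {}
    return [
        {rename.get(name, name): value for name, value in e.items() if name not in remove}
        for e in events
    ]


def expand_array(events, field):
    """Expand array: emit one row per element of the array stored in `field`.

    Events whose field is missing or empty produce no rows in this sketch.
    """
    rows = []
    for e in events:
        for item in e.get(field) or []:
            row = dict(e)
            row[field] = item
            rows.append(row)
    return rows


def union(*inputs):
    """Union: combine all inputs, keeping only field names present in every event."""
    key_sets = [set(e) for events in inputs for e in events]
    if not key_sets:
        return []
    shared = set.intersection(*key_sets)
    return [{k: v for k, v in e.items() if k in shared} for events in inputs for e in events]


# Hypothetical sample inputs.
stream_a = [
    {"deviceId": "sensor-1", "temperature": 21.5, "readings": [1, 2]},
    {"deviceId": "sensor-2", "temperature": 35.0, "readings": [3]},
]
stream_b = [{"deviceId": "sensor-7", "tempC": 19.0, "site": "north"}]

hot = filter_events(stream_a, lambda e: e["temperature"] > 30)
tidy = manage_fields(stream_a, rename={"temperature": "tempC"}, remove=("readings",))
rows = expand_array(stream_a, "readings")
combined = union(tidy, stream_b)
print(hot)       # only the sensor-2 event
print(tidy)      # "temperature" renamed to "tempC", "readings" removed
print(rows)      # three rows, one per array element
print(combined)  # "site" dropped because stream_a events lack it
```

Each function takes and returns plain lists of dictionaries, so they can be chained in any order, loosely mirroring how the editor lets you connect one transformation's output to the next.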

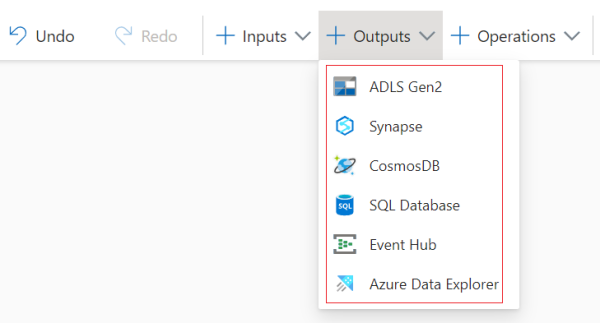

E. In the Outputs section, you can select different outputs for your Stream Analytics job.

- Azure Data Lake Storage Gen2: This is a cost-effective and scalable solution for storing large amounts of enterprise data. Select ADLS Gen2 and choose the container where you want to send the job output. To avoid the limitations of user-based authentication methods, you can select Managed Identity as the authentication mode.

- Azure Synapse Analytics: Select Synapse as the output for your Stream Analytics job and choose the SQL pool table that will receive the job output.

- Azure Cosmos DB: This is a database service that offers limitless elastic scale around the globe. Under Cosmos DB, you can select Managed Identity as the authentication mode to grant the manager role to the Stream Analytics job.

- Azure SQL Database: Azure SQL Database is a high-performance data storage layer for applications and solutions in Azure. By selecting SQL Database, you can configure Azure Stream Analytics jobs to write the processed data to an existing table in SQL Database.

- Event Hub: By selecting Event Hub, you can transform and enrich the data and then output it to another event hub or to Azure Stream Analytics.

- Azure Data Explorer: Select Azure Data Explorer as the output to analyze high volumes of data.

Conclusion

Azure Stream Analytics No-Code Editor makes your data ingestion, transformation, and loading tasks easier. You can filter, ingest, materialize, or dump data with a single click. The editor also supports many components, including multiple inputs, parallel branches with various transformations, and multiple outputs, and you can develop and run a Stream Analytics job in a few seconds.

Do you need to create a Stream Analytics job without writing a single line of code? Metclouds Technologies is here to help you with the Azure Stream Analytics No-Code Editor.